Speech processing hierarchy in the dog brain

Dog brains, just like human brains, process speech hierarchically, with intonation at lower stages and word meaning at higher stages, according to a new study by Hungarian researchers at the Department of Ethology, Faculty of Science, Eötvös Loránd University (ELTE), who used functional MRI on awake dogs. The study, which reveals exciting similarities in speech processing between humans and a speechless species, has been published in Scientific Reports.

Humans talk to dogs all the time, and dogs’ sensitivity to human communicative signals is well known. Both what we say (the words) and how we say it (the intonation) carry information for them. For example, when we say ‘sit’, many dogs sit down. Similarly, when we praise dogs in a high-pitched voice, they may notice the positive intent. We know very little, however, about what goes on in their brains while they listen.

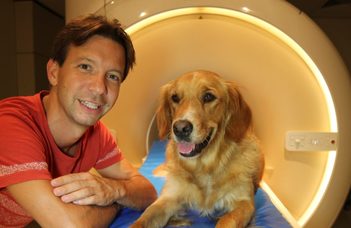

In this study, Hungarian researchers measured the brain activity of awake, cooperative dogs using functional magnetic resonance imaging (fMRI). The dogs listened to known praise words (clever, well done, that’s it) and unknown neutral words (such, as if, yet), each spoken in both praising and neutral intonation.

“Exploring speech processing similarities and differences between dog and human brains can help a lot in understanding the steps that led to the emergence of speech during evolution. Human brains process speech hierarchically: first intonation at lower stages, then word meaning at higher stages. Some years ago, we discovered that dog brains, just like human brains, separate intonation and word meaning. But is the hierarchy also similar? To find out, we used a special technique this time: we measured how dog brain activity decreases in response to repeatedly played stimuli. During brain scanning, we sometimes repeated words and sometimes repeated intonations. A stronger decrease in a given brain region in response to certain repetitions shows the region’s involvement” – explains Anna Gábor, postdoctoral researcher at the MTA-ELTE ‘Lendület’ Neuroethology of Communication Research Group and lead author of the study.

The results show that dog brains, just like human brains, process speech hierarchically: intonation is processed at lower stages (mostly in subcortical regions), while known words are processed at higher stages (in cortical regions). Interestingly, older dogs distinguished between words less than younger dogs did.

“Although speech processing in humans is unique in many aspects, this study revealed exciting similarities between us and a speechless species. The similarity does not imply, however, that this hierarchy evolved for speech processing” – says Attila Andics, principal investigator of the MTA-ELTE ‘Lendület’ Neuroethology of Communication Research Group. “Instead, the hierarchy that intonation and word meaning processing follows, reported here and also in humans, may reflect a more general processing principle that is not specific to speech. Simpler, emotionally loaded cues (such as intonation) are typically analysed at lower stages, while more complex, learnt cues (such as word meaning) are analysed at higher stages in multiple species. What our results really shed light on is that human speech processing may also follow this more basic, more general hierarchy.”

The study, titled “Multilevel fMRI adaptation for spoken word processing in the awake dog brain”, was published in Scientific Reports and was written by Anna Gábor, Márta Gácsi, Dóra Szabó, Ádám Miklósi, Enikő Kubinyi and Attila Andics. The research was funded by the Hungarian Academy of Sciences (‘Lendület’ Program), the European Research Council (ERC), the Ministry of Human Capacities, the Hungarian Scientific Research Fund and Eötvös Loránd University (ELTE).

Link to the video abstract: https://www.youtube.com/watch?v=9EhI80fdEbw&feature=youtu.be

Link to the original publication: https://www.nature.com/articles/s41598-020-68821-6